
After a patent application is filed with the U.S. Patent and Trademark Office (USPTO), it gets assigned to an art unit and a patent examiner in that art unit who is responsible for reviewing the application, doing a prior art search, and determining whether to grant a patent.


Examiners at the USPTO have expertise in specific technology areas, and a high-quality examination depends on matching the technology of the application to the expertise of the examiner.

The details of the assignment process are not important here, but the idea is that a patent application is “classified,” meaning that codes are assigned to indicate its technological subject matter, and an art unit and examiner with expertise in that technology are then selected.

Recent Changes

In the past, this process was manual. People would review patent applications to assign classification codes, and then other people would determine the art unit and examiner to be assigned using the classification codes.

More recently, the USPTO has begun automating the assignment process. The assignment process is a strong candidate for machine learning because large amounts of training data are available to train a model. Automating it has several advantages: lower costs, faster processing, and more consistent (and likely better) assignments of applications to art units and examiners.

Several companies provide tools that predict the art unit that will be assigned to a patent application after it is filed. Examples include Juristat, Patent Bots, PatSnap, and Serco. A patent practitioner can pay to use these tools to influence the art unit that will be assigned to a patent application after it is filed. The analysis here uses the Patent Bots tool.

The USPTO has stated publicly that it is transitioning to using more automation in the patent assignment process, and the improved accuracy of the art unit predictor tool provides independent confirmation.

The improved accuracy of the Patent Bots art unit predictor tool over the last two years shows that the USPTO is increasing the use of automation in assigning patent applications to art units. This conclusion is based on differences between manual processing by people and automated processing by machines.

People are much less predictable than machines. Asking different people to classify the same patent might result in different outcomes. Even asking the same person to classify the same patent on two different days might result in different outcomes. By contrast, when a machine does the classification, the outcome will always be the same.

Accordingly, when you try to predict what a person will do in assigning a patent application to an art unit, the accuracy of the prediction will be lower because the outcome has more randomness. But when you try to predict what a machine will do, the accuracy will be higher because the machine produces the same result each time. If the process is fully automated, near-perfect accuracy should be possible, since a machine learning model can learn even a complex decision-making process, as long as that process is consistent.
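A toy simulation can make this argument concrete. It is a hypothetical model, not a description of the USPTO process or of any vendor's tool: each application has one "rule" art unit determined by some fixed process, and the assigner follows that rule except that, with probability `noise`, it picks an art unit at random. Even a predictor that has learned the rule perfectly can only match the observed assignments about `(1 - noise) + noise / n_units` of the time, so the noisier the assigner, the lower the ceiling on prediction accuracy.

```python
import random
from typing import List, Tuple


def simulate_assignments(n_apps: int, n_units: int, noise: float,
                         seed: int = 0) -> Tuple[List[int], List[int]]:
    """Hypothetical model of an assigner that sometimes deviates from a fixed rule.

    Returns (rule_units, observed_units): the art unit a consistent rule would
    pick for each application, and the art unit actually assigned when the
    assigner picks a uniformly random unit with probability `noise`.
    """
    rng = random.Random(seed)
    rule_units = [rng.randrange(n_units) for _ in range(n_apps)]
    observed_units = [
        rng.randrange(n_units) if rng.random() < noise else unit
        for unit in rule_units
    ]
    return rule_units, observed_units


def accuracy(predicted: List[int], actual: List[int]) -> float:
    """Fraction of positions where the prediction equals the actual label."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


def accuracy_ceiling(noise: float, n_units: int) -> float:
    """Expected accuracy of a predictor that knows the rule exactly."""
    # It is right whenever the assigner follows the rule, plus the 1/n_units
    # chance that a random pick happens to land on the rule's unit anyway.
    return (1 - noise) + noise / n_units


if __name__ == "__main__":
    for noise in (0.0, 0.1, 0.3):
        rule, observed = simulate_assignments(100_000, 50, noise)
        # The "perfect" predictor simply predicts the rule's unit every time.
        print(f"noise={noise:.1f}  simulated={accuracy(rule, observed):.3f}  "
              f"ceiling={accuracy_ceiling(noise, 50):.3f}")
```

Running the script prints the simulated accuracy next to the ceiling for each noise level, and the two should agree closely. The point is only qualitative: a perfectly consistent assigner (noise of 0) can in principle be predicted exactly, while an inconsistent one caps accuracy no matter how good the predictor is.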

The Data

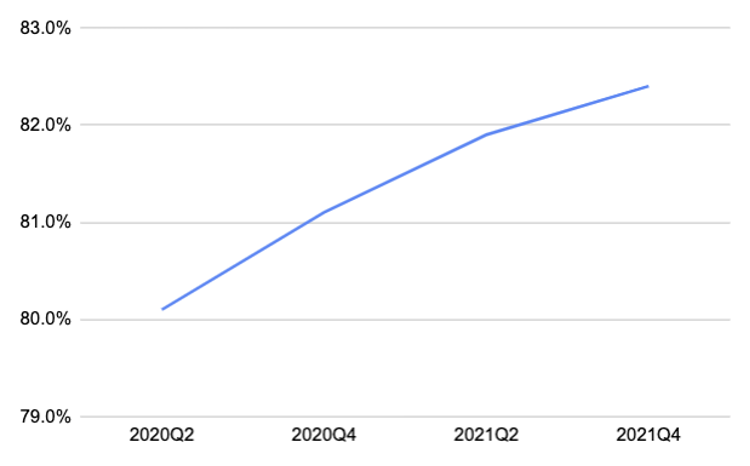

As the USPTO transitions the patent assignment process to replace decisions by people with decisions by machines, it should thus be easier for a third-party tool to predict the outcome. The following graph shows the accuracy of the Patent Bots art unit predictor over the last two years:

The art unit predictor tool predicts five candidate art units, and a prediction is counted as correct when the art unit actually assigned is one of those five (often called top-5 accuracy). The accuracy numbers in the above chart are computed at six-month intervals over two years.
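For readers unfamiliar with the metric, top-k accuracy can be computed as follows. This is a generic sketch, not the Patent Bots implementation; the function name and the art unit numbers in the example are hypothetical, and predictions are assumed to be listed in ranked order.

```python
from typing import Sequence


def top_k_accuracy(predictions: Sequence[Sequence[str]],
                   actual: Sequence[str], k: int = 5) -> float:
    """Fraction of applications whose assigned art unit is among the top k predictions.

    predictions[i] is a ranked list of predicted art units for application i;
    actual[i] is the art unit the application was actually assigned.
    """
    if len(predictions) != len(actual):
        raise ValueError("predictions and actual must have the same length")
    if not actual:
        raise ValueError("need at least one application")
    hits = sum(unit in ranked[:k] for ranked, unit in zip(predictions, actual))
    return hits / len(actual)


# Hypothetical example: three applications, five ranked guesses each.
predictions = [
    ["2121", "2122", "2123", "2124", "2125"],
    ["3621", "3622", "3623", "3624", "3625"],
    ["1711", "1712", "1713", "1714", "1715"],
]
actual = ["2124", "3700", "1711"]

print(top_k_accuracy(predictions, actual, k=5))  # 2 of 3 applications hit
print(top_k_accuracy(predictions, actual, k=1))  # only the third hits
```

Because a hit within the first guess is also a hit within the first five, top-1 accuracy is never higher than top-5 accuracy, so figures reported under different values of k should not be compared directly.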

The implementation of the art unit predictor tool has not changed over this time period. The only change is the data used to train and test the art unit predictor tool. The accuracy of the predictor is clearly increasing over time.
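A chart of point estimates alone does not show how much of a change could be sampling noise. If the number of test applications behind each six-month figure is known (the article does not report it), one way to attach uncertainty is a Wilson score interval for each accuracy, sketched below. The helper names and the counts in the usage example are hypothetical. Non-overlapping 95% intervals are a conservative sign of a real difference; overlapping intervals do not rule one out, and a formal two-proportion test is the more rigorous check.

```python
import math
from typing import Tuple


def wilson_interval(hits: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for the proportion hits/n (z=1.96 gives ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= hits <= n:
        raise ValueError("hits must be between 0 and n")
    p = hits / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


def intervals_overlap(a: Tuple[float, float], b: Tuple[float, float]) -> bool:
    """True if the closed intervals a and b share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Hypothetical counts: the same 60% vs 70% accuracy gap at two sample sizes.
print(intervals_overlap(wilson_interval(60, 100), wilson_interval(70, 100)))    # True
print(intervals_overlap(wilson_interval(600, 1000), wilson_interval(700, 1000)))  # False
```

The example shows why sample size matters: the same ten-point gap is within the noise at 100 applications per interval but clearly separated at 1,000.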

The increased accuracy of the art unit predictor tool means that the USPTO is becoming more predictable in its process of assigning applications to art units. This is better for the patent system because similar applications will be assigned to the same art units and those art units will have (or learn) more expertise in those technology areas.

The Fully Automated Future

As the USPTO continues to automate the patent assignment process, the ability of third parties to predict the art unit assigned to an application will continue to increase. Where the USPTO process is fully automated, near-perfect accuracy may even be possible, including predicting the individual examiner who will be assigned to a patent application.

If and when the USPTO assignment process becomes fully automated, it is plausible that the USPTO might provide a public-facing tool to allow all patent applicants to see the likely assignment of their application to an art unit or examiner. This would obviate the need for third-party art unit prediction tools.

Consistency in the process for examining patent applications benefits the entire patent community because it promotes more consistent and thus fairer outcomes. Automating the patent assignment process is one step in promoting consistency and I look forward to further advances in USPTO technology to increase consistency and fairness in the patent examination process.

Image Source: Deposit Photos

Image ID: 380462812

Copyright: CYCLONEPROJECT


Join the Discussion

6 comments so far.

Lisa Schreihart

March 28, 2022 01:12 pm

Anon,

I won’t particularly comment on your questions as to the examiner union contract or whether some examiners could just rubber stamp. I suppose some could; probably many do their best to comply. It’s a new process, and with many new processes at any workplace, it sometimes takes a while to get everyone onboard, at least until they start seeing the benefit.

Lisa

Lisa Schreihart

March 28, 2022 01:08 pm

Anon,

IPWatchdog has had Drew Hirshfeld in webinars a few times talking about the new AI and how examiners are helping to improve the classification of applications. Look at the IPWatchdog webinar list and you will see a few recent webinars.

Thanks,

Lisa

Primary Examiner

March 23, 2022 07:54 am

Lisa, good points, and I agree. The two tasks (reviewing the classification picture and potentially having to initiate a challenge) have become quite common for most Examiners. We are given a bit of time to complete these tasks. Most Examiners I’ve talked to prefer the old days, when the classification was done by contractors and was out of the Examiner’s hands. Depending on which area you examine, an Examiner can now spend a considerable amount of time performing these new tasks.

Anon

March 22, 2022 03:52 pm

Ms. Schreihart,

Where can I read more about this? It appears that any such ‘new tasking’ violates the union agreement that examiners operate under. What possible penalty would there be for examiners to either:

a) say “No” to this added work?

b) passive-aggressively ‘comply’ by saying they have done the work, but just rubber stamp (thereby creating possible Type I as well as Type II errors)?

Thanks,

Lisa Schreihart

March 18, 2022 12:30 pm

Along with the automation has come added examiner scrutiny: one more task in an examiner’s long list of tasks, to review the classification picture and perhaps file a classification challenge, internal to the USPTO, before the examiner starts examining. But to do that, the examiner has to spend some time looking at the application, which may not stay with that examiner. Yes, I agree, as the AI gets better, examiners won’t have to do this, but for now it is a new task, and not one that is fully embraced, since it takes time away from the usual examination process. In other words, some of the classification scrutiny that contractors previously did before an application was assigned to an examiner now has to be done by the examiner. Examiners are essentially tasked with helping to improve the AI. That’s the goal, anyway.

Louis Iselin

March 17, 2022 08:07 am

The graph has no error bars, so the “improvement” could just be noise.