As software products and services increasingly take advantage of the emerging capabilities of artificial intelligence (AI), software developers and companies that license software face evolving legal risks and contractual considerations. Software developers and licensees that fail to negotiate clear software license agreements that account for unique aspects of licensing AI-powered software may find themselves facing unexpected liability or costly software license dispute litigation. When drafting and negotiating software license agreements, parties should carefully consider the legal implications of developing and using software that incorporates AI.

Software developers and licensees encounter two common types of AI-powered software products implicated in licensing agreements: (1) software products that leverage third-party AI services hosted offsite; and (2) software products that leverage custom-built AI, deployed either in the cloud or within on-premises infrastructure. Each type of AI integration gives rise to important strategic considerations and risks that stem from using AI services, including intellectual property rights, data privacy, security and breach concerns, gatekeeper responsibilities, service performance guarantees, and evolving legal and regulatory landscapes. In this article, we discuss important issues parties should consider when negotiating master service agreements (MSAs), statements of work, and other license agreements that involve AI. This article also offers insights and recommendations to proactively manage risks and negotiate favorable contract terms.

Software Products That Leverage Third-Party AI Services

Software companies rely upon powerful AI services from tech giants like Microsoft, Google, and Amazon to enhance their products with capabilities such as natural language processing (NLP) and predictive analytics. While incorporating third-party AI services can provide compelling features, professionals tasked with managing licensing of these assets should carefully consider potential risks associated with their use.

The following figure illustrates a scenario where a customer licenses software from a Software Vendor that leverages third-party AI functionality—in this example, an OpenAI large language model (LLM) running on Microsoft Azure.

In the above example, the Customer has a software license agreement that addresses the scope of the relationship with Software Vendor, as well as a separate agreement with Microsoft that addresses the scope of services provided by Microsoft Azure. On top of that, OpenAI publishes a list of representations and promises describing how it uses (or does not use) client data in connection with the OpenAI LLM. The Software Vendor, Microsoft, and OpenAI all provide some form of functionality relating to the software product licensed by the Customer, which includes handling and processing confidential customer data. This raises important considerations when licensing the software product, including allocation of liability relating to software functionality, responsibility for data privacy and security, and IP rights.

A. Errors or Damages Caused by Third-Party AI

Consider the following hypothetical. A healthcare software company that sells its products to hospitals and medical service providers uses a third-party AI model, like Google Vertex AI, to analyze medical images for early disease detection. Due to issues with how the AI model was trained, the software misclassifies thousands of X-rays—leading to numerous false positives, unnecessary patient anxiety, follow-up tests, and in a few cases, unneeded invasive procedures. Even if the healthcare software company includes a “reliance” clause in its license agreement—stating, for example, that the software provider cannot guarantee the accuracy of third-party AI services—a court may still impose a duty on the healthcare software company as an “informed intermediary” with specialized knowledge in AI and healthcare to protect end-users from known risks in AI technology. See Moll v. Intuitive Surgical, Inc., 2014 WL 1389652, at *4 (E.D. La. April 1, 2014) (holding that using a software product like a medical robot does not remove the software user / service provider from the scope of liability). By deciding to integrate a particular AI service, the healthcare software company could be seen as endorsing its capabilities. It is therefore critical that software developers not only include license terms addressing third-party AI functionality, but also carefully consider potential legal risks where special duties may attach.

B. Data Privacy and Security Risks

When a software product or service integrates with a third-party AI service, data flows in multiple directions—(1) from software vendor systems to AI, through their AI models, and back again; and (2) from software vendor systems to client systems, and back. This expanded data journey can increase privacy and security risks.

Looking at Microsoft Azure as an exemplar, Microsoft states that Azure OpenAI maintains strict data privacy and security measures for customer interactions. See Data, privacy, and security for Azure OpenAI Service. Microsoft also represents that customer prompts, completions, embeddings, and training data are kept confidential and are not shared with other customers, OpenAI, or used to improve any models or services. While Azure OpenAI handles prompts, generated content and data, Microsoft states that it does not use this information to automatically enhance models. Customers can fine-tune models with their own data, but these customized models remain exclusively available to the specific customer who created them.

In the hypothetical involving the healthcare software company, imagine that an authentication flaw in a third-party AI’s API allowed a hacker group to intercept the data stream, exposing thousands of medical images and associated protected health information (PHI). Claims of HIPAA, GDPR, and CCPA violations, along with potential multi-million-dollar penalties from regulators, would likely follow. Even if an AI provider like Microsoft takes responsibility for the specific vulnerability, the healthcare software provider could still face liability on the basis that it has a heightened duty to secure personal data through adequate vetting of third-party partners and end-to-end encryption. See, e.g., In re Anthem, Inc. Data Breach Litig., 162 F. Supp. 3d 953, 1010–11 (N.D. Cal. 2016) (finding the plaintiffs could pursue breach of contract claims as third-party beneficiaries because the contract terms established that the defendant “could be held to privacy standards above and beyond the standards required under federal law”). If the operative service contract with the software vendor includes a clause representing that the software vendor will follow “industry best practices” for safeguarding PHI, this could impose further liability on the software vendor in this scenario.

C. IP Considerations

Beyond errors and security risks, software that relies on third-party AI also introduces potential complexities associated with protecting intellectual property rights. For example, poorly worded software license agreements may leave ambiguity over ownership rights to the AI model’s inputs and the outputs they generate.

Scenarios where copyrighted works are used to train LLMs that allegedly create infringing derivative works are already the subject of contentious litigation. See, e.g., Kadrey and Silverman et al. v. Meta Platforms, Inc., 3:23-cv-03417 (N.D. Cal., July 7, 2023) (plaintiffs allege that LLaMA’s “outputs (or portions of the outputs) are similar enough to the plaintiffs’ books to be infringing derivative works”). Considering the healthcare software company scenario described earlier, imagine that the licensed software utilizes AI services to generate data visualization charts and dashboards for medical service providers tailored to patient data. The AI provider could potentially exploit the software vendor’s proprietary code and the end customer’s confidential data to enhance its AI model for competitors of the software vendor and end customer. The AI provider might also assert intellectual property rights over outputs generated by the AI services, even when those outputs are derived using software vendor code and end customer data inputs. This could have a substantial impact on the software provider’s leverage in the competitive marketplace, and it increases the possibility that confidential customer information is used without permission.

Infringement liability is also an important consideration. If the AI service is found to have infringed third-party IP rights through techniques like training-data scraping, the software vendor could be liable for resulting copyright violations.

AI provider terms and conditions regarding IP rights vary. For example, Anthropic lets its users “retain all right, title, and interest—including any intellectual property rights” in the input or the prompts. In addition, Anthropic disclaims rights to customer content and states that customers own all outputs generated, assigning any potential rights in outputs to the customer. However, Anthropic’s commitment not to train its models on customer content explicitly mentions only “Customer Content from paid Services” and is subject to customers’ compliance with Anthropic’s terms of service. See Anthropic’s Terms of Service. Parties leveraging AI need to carefully consider implications relating to IP rights.

Software Products That Leverage Custom-Built AI, Either On-Premises or in the Cloud

Software products that rely on proprietary AI solutions deployed on-premises or in the software provider’s cloud can allow for increased flexibility and control over features, as well as greater control over access to confidential data. At the same time, the party responsible for providing and maintaining the underlying infrastructure that houses the AI services faces heightened risks relating to data governance, system integration, and product/service quality.

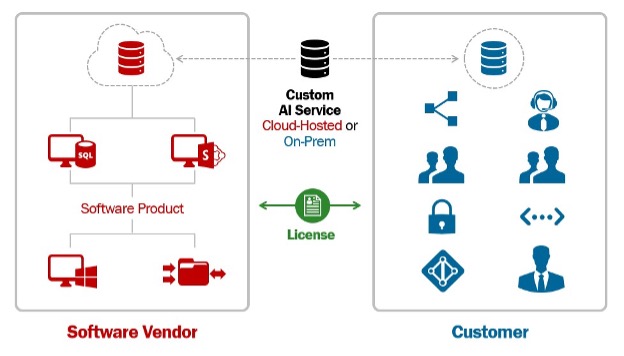

The following figure illustrates a scenario where a Customer licenses software that leverages custom-built AI functionality hosted either (1) on-premises on Customer IT infrastructure or (2) in the cloud by the Software Vendor.

In the above scenario, the services responsible for providing AI functionality reside either in the Customer’s or the Software Vendor’s IT infrastructure. The location where the AI services reside is important, as the entity responsible for managing that infrastructure may incur “gatekeeping” responsibilities tied to the use of the AI service. This gatekeeping duty can carry significant liability risks. The arrangement and location of the AI functionality also raises important questions regarding performance guarantees.

A. Gatekeeper Role

Assume Software Vendor sells expense management software that uses custom-tailored NLP AI hosted on the Software Vendor’s cloud to scan invoices and automate payments. The NLP AI model ultimately misinterprets handwritten figures, causing a client to overpay a vendor by $5 million. While the Software Vendor could argue that their NLP API simply passed along raw outputs and it was the Customer’s responsibility to scrutinize those outputs before acting on them, a court could find that a “decision aid” technology vendor has a duty to implement appropriate safeguards and human oversight checkpoints. The fact that the AI services are hosted on the Software Vendor’s cloud heightens the risk of this potential outcome.
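To make the idea of a "human oversight checkpoint" concrete, the following is a minimal sketch of how a vendor might route AI-extracted invoice amounts either to automatic payment or to manual review. Every name here (`InvoiceReading`, `route_payment`) and both thresholds are hypothetical and chosen only for illustration; they do not describe any actual product or a legally sufficient safeguard.

```python
from dataclasses import dataclass


@dataclass
class InvoiceReading:
    """A single amount extracted from an invoice by the AI model (hypothetical shape)."""
    invoice_id: str
    amount: float      # amount the model read from the invoice
    confidence: float  # model's self-reported confidence, from 0.0 to 1.0


def route_payment(reading: InvoiceReading,
                  min_confidence: float = 0.95,
                  max_auto_amount: float = 10_000.0) -> str:
    """Return 'auto_pay' or 'human_review' for one extracted invoice amount.

    Both thresholds are illustrative assumptions; in practice the parties
    would negotiate them and record them in the agreement.
    """
    # Low-confidence readings (e.g., handwritten figures) always go to a person.
    if reading.confidence < min_confidence:
        return "human_review"
    # Large payments go to a person even when the model is confident.
    if reading.amount > max_auto_amount:
        return "human_review"
    return "auto_pay"


# A confident but very large reading is still held for review.
print(route_payment(InvoiceReading("INV-001", 5_000_000.0, 0.99)))
```

A checkpoint of this kind also produces a record of which payments were reviewed by a person, which may matter when the parties later dispute who was responsible for scrutinizing the AI output.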

As another example, assume Software Vendor sells automated hiring and resume screening software that leverages custom-built AI hosted on-premises in the Customer’s IT infrastructure. This kind of tool should be designed to prevent illegal discrimination and bias from impacting hiring decisions. See Mobley v. Workday Inc., No. 3:23-cv-00770 (N.D. Cal., Feb. 21, 2023) (job applicant filed suit against human resources software firm Workday, alleging that it violated federal anti-bias laws by using AI-powered software to screen out job applicants for racially discriminatory reasons). The Customer in this scenario needs to consider the risks associated with hosting and relying on automated software that leverages AI, which has known issues tied to generating responses that exhibit bias and errors. Customers utilizing such AI solutions should consider dedicated human oversight teams that review outputs for compliance with legal requirements and ethical guidelines.

Finally, assume Software Vendor sells software solutions to FinTech companies that use AI to detect financial crimes, payment fraud, and identity theft. The Customer—and potentially the Software Vendor, depending on the nature of the license agreement—may have a gatekeeping duty to validate AI outputs and correct false positives that stem from any racial or religious biases before freezing accounts or reporting individuals to authorities.

B. Service Level Agreements (SLAs) and Performance Guarantees

The transient, evolving nature of AI requires a more nuanced approach to the uptime guarantees commonly included in service level agreements. Consider, for example, a Software Vendor that sells AI-powered software that monitors data centers, dynamically detects anomalies, and predicts system failures. Certain AI systems are susceptible to natural performance degradation over time as real-world data distributions shift away from those on which the static AI model was initially trained. If the Software Vendor guarantees software uptime in a service level agreement, degradations in software performance caused by such drift or by changes in third-party AI models could violate those uptime promises. In a potential legal dispute over breach of a service level agreement with uptime requirements, a court might conclude that for a product that touts AI as a key selling point over traditional algorithms, the AI-powered product must remain continually tuned and calibrated to maintain a reasonable level of predictive or analytical performance. For traditional software, uptime means computational availability, but for AI solutions, “uptime” might need to account for the availability of accurate, effective outputs from the AI models themselves.
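The difference between traditional uptime and an accuracy-aware notion of "uptime" can be shown with a short calculation. The sketch below is illustrative only: the `Interval` record, the `effective_uptime` function, and the 0.90 accuracy floor are assumptions made for this example, not terms drawn from any actual service level agreement.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Interval:
    """One monitoring window (hypothetical shape)."""
    available: bool  # did the service respond during this window?
    accuracy: float  # accuracy measured on labeled samples in this window


def uptime(intervals: List[Interval]) -> float:
    """Traditional uptime: fraction of windows in which the service responded."""
    if not intervals:
        raise ValueError("at least one interval is required")
    return sum(i.available for i in intervals) / len(intervals)


def effective_uptime(intervals: List[Interval], min_accuracy: float) -> float:
    """Accuracy-aware uptime: a window counts only if the service responded
    AND measured accuracy met the agreed floor (an assumed contract term)."""
    if not intervals:
        raise ValueError("at least one interval is required")
    good = sum(i.available and i.accuracy >= min_accuracy for i in intervals)
    return good / len(intervals)


windows = [
    Interval(True, 0.95),
    Interval(True, 0.80),   # responding, but accuracy has drifted below the floor
    Interval(False, 0.0),   # outage
    Interval(True, 0.92),
]
print(uptime(windows))                  # 0.75
print(effective_uptime(windows, 0.90))  # 0.5
```

In this example the service meets a 75% availability figure while delivering acceptable outputs only half the time, which is the gap a court or counterparty might focus on if the agreement defines "uptime" without reference to output quality.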

Lessons Learned from Litigation—Best Practices

Software that leverages AI functionality often handles personal information, financial data, intellectual property, and other sensitive information. This raises important liability considerations for software vendors and companies that license AI-powered software. The following list offers some best practices for parties seeking to proactively manage risks when writing and negotiating software license agreements:

A. For Software Products That Use Third-Party AI

- Carefully scrutinize broad “as is” clauses for third-party components, as they may offer less protection than anticipated.

- Rigorously test any AI service before integration—and document these efforts.

- Negotiate stronger indemnification terms with third-party AI service providers, especially for enterprise clients.

- Identify and provide notice of functions that rely on external AI services, and clearly articulate limitations on capabilities.

- Clearly articulate IP ownership rights associated with AI-generated content, including ownership of inputs and outputs, as well as rights associated with trained AI models and use across different deployment environments.

- Regularly audit third-party AI performance, and provide customers with direct links to the third party’s performance metrics and incident reports.

- Ensure that any marketing materials accurately describe AI-related capabilities and limitations.

- Memorialize procedures for secure data storage, retention periods, and deletion processes.

- Ensure the AI system’s data practices adhere to data privacy laws like GDPR and CCPA, and update these practices as more jurisdictions put new laws in place.

B. For Software Products That Use Custom-Built AI

- Articulate whether AI software is hosted on-premises on Customer IT infrastructure or in the cloud by the software vendor, and detail responsibilities for data protection, security, and performance.

- Explicitly outline the scope of any gatekeeping responsibility over AI solutions to comply with legal and ethical requirements.

- Establish concrete metrics for “reasonable AI performance” that align with the parties’ expectations as well as known issues with AI performance, such as training data drift.

While the evolving capabilities of AI bring increased functionality and features, they also raise important legal considerations for parties negotiating software license agreements. As software incorporating AI becomes more common, disputes over software license terms are likely to increase. Software vendors and licensees alike should understand and carefully consider the risks associated with licensing AI software. Those unwilling to embrace this responsibility could face significant business and legal repercussions as the “move fast and break things” ethos collides with the general public’s demands for safe, reliable, accountable, and ethical use of AI.

