
In an ideal world, issued patents would not contain errors. In reality, patent drafting is tedious, time-consuming work, and perfection is not an attainable goal. The patent industry seems to be steadily getting better, though.

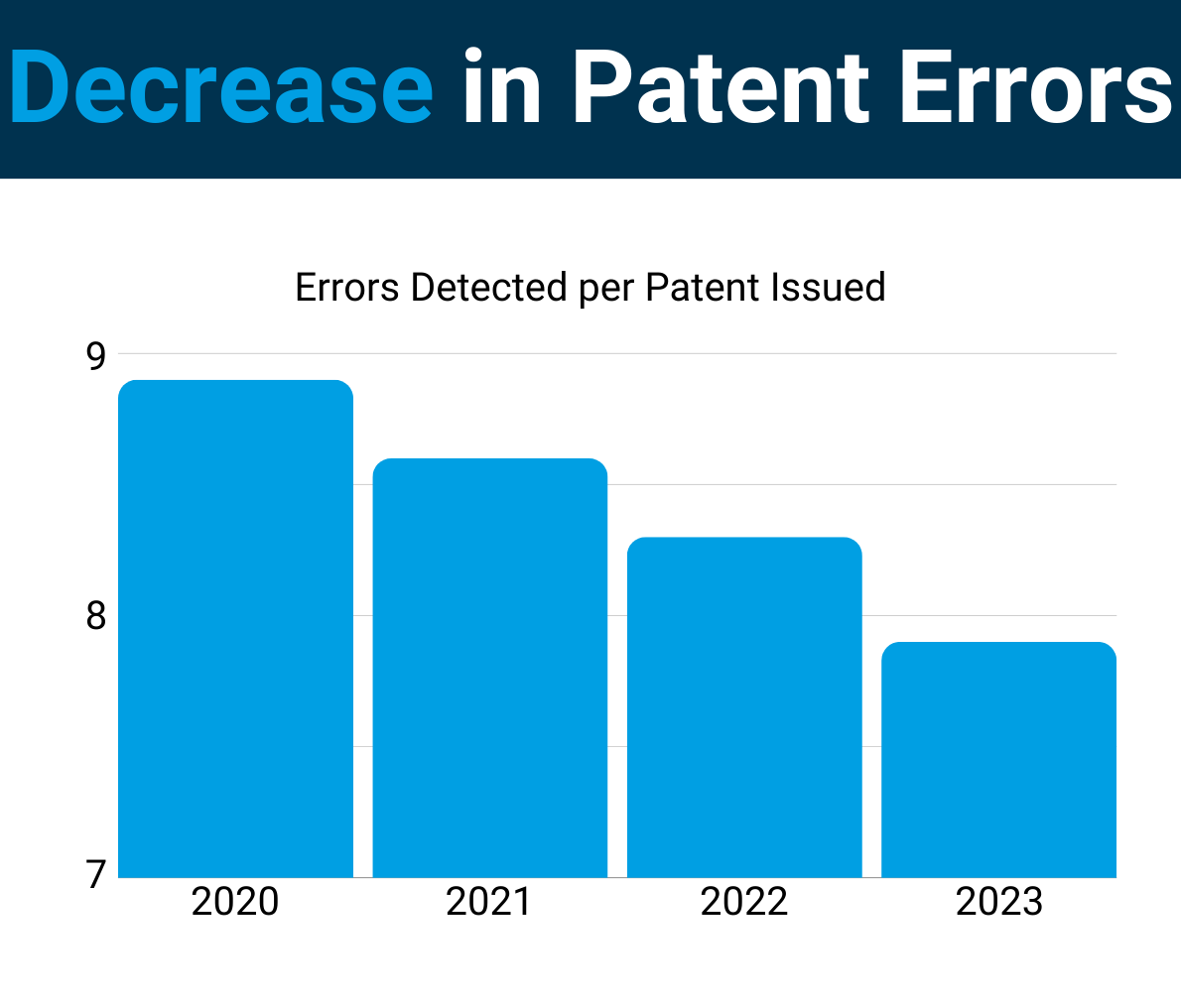

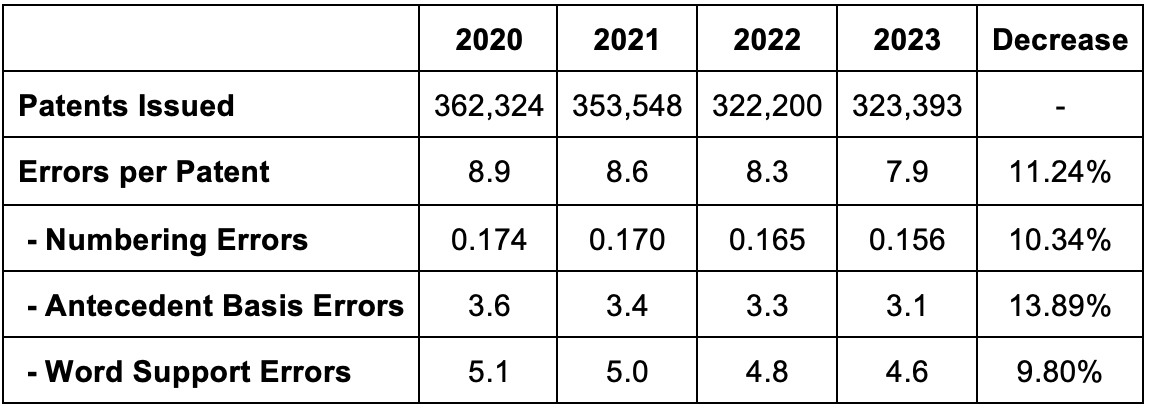

In a recent study, we uncovered an 11.24% decrease in errors per patent over the past four years. We observed this decrease by reviewing every patent issued by the U.S. Patent and Trademark Office (USPTO) since 2020 – nearly 1.4 million patents.

Here are the findings:

Temporary Dip or Long-Term Trend?

The steadiness of the decrease year after year across all three types of errors we track indicates a trend. This is not a one-time anomaly. And an 11.2% decrease in just four years is not a small dip. (It would have been interesting to extend our review as far back as 2010 or 2000. Unfortunately, the USPTO data format has changed over time, and existing tools cannot handle the older data formats.)

What Types of Patent Errors are Detectable?

For this study, we focused on three types of errors – numbering errors, antecedent basis errors, and word support errors. These types of errors were selected because (1) they are important errors to avoid and (2) our proofreading software can reliably find them.

Note that the data only includes “red” errors in the software’s proofreading results. The “yellow” warnings were not included because the warnings are more subjective.

Numbering Errors: 10.3% Decrease Since 2020.

Numbering errors include both claim numbering errors (e.g., skipping a claim number or repeating a claim number) and dependency errors (e.g., a method claim depending from a system claim). Most (and maybe all) of the errors here are dependency errors.

These errors are relatively rare because they are easier for humans to find. Even so, they are decreasing as well.
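As a rough illustration of the two kinds of numbering errors described above, the following Python sketch flags repeated or skipped claim numbers and dependencies that cross claim categories. It is not the software's actual method: the `Claim` structure, its `category` labels, and the `numbering_errors` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    number: int                       # claim number as printed
    category: str                     # assumed label, e.g. "system" or "method"
    depends_on: Optional[int] = None  # parent claim number; None if independent


def numbering_errors(claims: list[Claim]) -> list[str]:
    """Describe numbering and dependency errors in a claim set (illustrative only)."""
    errors: list[str] = []
    seen: dict[int, Claim] = {}
    previous = 0
    for claim in claims:
        # Claim numbering errors: a repeated or a skipped claim number.
        if claim.number in seen:
            errors.append(f"claim {claim.number} is repeated")
        elif claim.number > previous + 1:
            errors.append(f"numbering jumps from {previous} to {claim.number}")
        # Dependency errors: parent missing, or parent of a different category.
        if claim.depends_on is not None:
            parent = seen.get(claim.depends_on)
            if parent is None:
                errors.append(
                    f"claim {claim.number} depends from claim {claim.depends_on}, "
                    "which does not precede it"
                )
            elif parent.category != claim.category:
                errors.append(
                    f"{claim.category} claim {claim.number} depends from "
                    f"{parent.category} claim {parent.number}"
                )
        seen.setdefault(claim.number, claim)
        previous = max(previous, claim.number)
    return errors


example_claims = [
    Claim(1, "system"),
    Claim(2, "system", depends_on=1),
    Claim(4, "method", depends_on=1),  # skips claim 3 and crosses categories
    Claim(4, "method"),                # repeats claim 4
]
numbering_error_report = numbering_errors(example_claims)
```

In this made-up claim set, the first claim 4 triggers both a numbering error and a dependency error, and the second claim 4 is reported as a repeat.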

Antecedent Basis Errors: 13.9% Decrease Since 2020.

When claims introduce a term, they should use an indefinite article (“a” or “an”). When claims later refer back to the same term, they should use the definite article (“the”). An antecedent basis error occurs when a claim uses a term with “the” but no earlier introduction of that term with “a” or “an” can be found.
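The rule above can be sketched in a few lines of Python. This is a deliberately naive illustration, not how any real proofreading tool works: it assumes each term is the single word that follows the article, whereas real claim terms are often multi-word phrases that need proper parsing. The function name `antecedent_basis_errors` and the sample claim are invented for the example.

```python
import re


def antecedent_basis_errors(claim_text: str) -> list[str]:
    """Return terms used with "the" before being introduced with "a" or "an".

    Simplifying assumption: a term is the single word after the article.
    """
    introduced: set[str] = set()
    errors: list[str] = []
    # Walk through every article + word pair in reading order.
    for article, term in re.findall(r"\b(a|an|the)\s+([a-z]+)", claim_text.lower()):
        if article in ("a", "an"):
            introduced.add(term)    # term now has antecedent basis
        elif term not in introduced:
            errors.append(term)     # "the <term>" with no earlier introduction
    return errors


sample_claim = (
    "A system comprising: a memory; and a processor, "
    "wherein the processor reads the memory and the cache."
)
missing_basis = antecedent_basis_errors(sample_claim)
```

Here “the processor” and “the memory” are fine because both terms were introduced earlier, while “the cache” is flagged because no “a cache” precedes it.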

Word Support Errors: 9.8% Decrease Since 2020.

Word support errors are claim words where neither the word nor a variant of the word (e.g., store, stores, stored, storing) was found in the detailed description section of the patent. Strictly speaking, these are not errors, but in my view it is very bad practice for claims to use words that are not in the detailed description.
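A minimal sketch of a word support check follows, under stated assumptions. Variants are matched with a crude suffix-stripping rule (`crude_stem`, a hypothetical helper that maps store, stores, stored, and storing to the same stem), and a tiny hand-picked stop-word list is skipped. A production tool would presumably use a proper stemmer or lemmatizer; nothing here reflects the actual implementation behind the study.

```python
import re

# Small illustrative stop-word list (an assumption, not a standard list).
STOP_WORDS = {"a", "an", "the", "and", "or", "in", "of", "to"}


def crude_stem(word: str) -> str:
    """Strip one common suffix so simple variants of a word share a stem."""
    for suffix in ("ing", "ed", "es", "s", "e"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def unsupported_words(claims_text: str, description_text: str) -> list[str]:
    """Return claim words with no matching stem in the detailed description."""
    description_stems = {
        crude_stem(w) for w in re.findall(r"[a-z]+", description_text.lower())
    }
    unsupported: list[str] = []
    for word in re.findall(r"[a-z]+", claims_text.lower()):
        if word in STOP_WORDS or word in unsupported:
            continue
        if crude_stem(word) not in description_stems:
            unsupported.append(word)
    return unsupported


sample_claims = "A processor storing data in a buffer and encrypting the data."
sample_description = "The processor stores data in the buffer."
flagged_words = unsupported_words(sample_claims, sample_description)
```

In this invented example, “storing” is supported because the description uses “stores”, while “encrypting” is flagged because no variant of it appears in the description.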

Attorneys Aren’t Perfect … And Neither is Automation.

It is important to note that proofreading tools are not perfect. Any proofreading tool will miss some errors and will mark some things as errors that are not actually errors. With modern machine learning technology, however, the accuracy of proofreading tools is quite high. Although the error counts for any single patent may not be exact, the tools give us a reliable overall view of industry-wide patent quality by reviewing an entire year of issued patents. Of course, we can’t be 100% certain of the trend’s cause, but it seems clear that the patent error rate is on the decline.

Disclaimer: The author is founder of Patent Bots, a software tool that is the source of the data relied upon in this article.

Join the Discussion

5 comments so far.

Julie Burke

February 18, 2024 06:51 pm

Hello Pro Say, thank you for your interest! More to come!

To clarify, at least back in 2014, OPQA abbreviated reviews were predominantly based upon the prior art of record. No additional searches were encouraged/permitted. Most of the time, no new references were found/applied. A recent OIG report on examiner consistency documents a four hour QAS review period.

OPQA results do not measure whether examiners got it right 95.9% of the time when carefully considering the whole world of prior art.

As inventors find out, PTAB proceedings and court challenges are not limited to the prior art of record. Litigators can spend much more than four person-hours when considering the validity of the claims.

https://www.linkedin.com/posts/julie-burke-492264120_since-2020-patent-errors-have-decreased-activity-7164418777428819968-SlF5?utm_source=share&utm_medium=member_desktop

Pro Say

February 17, 2024 09:13 pm

“In 4.1% of the surveyed allowances, the examiner missed a 102 and/or 103 rejection.”

Wrong way to look at this, Julie.

This means that 95.9% of the time, examiners got 102 / 103 correct.

That’s a strong “A” in anyone’s book. As it should be.

And this is especially so given the grey-area realm that is 103; where informed folks can — and regularly do — disagree.

All other gov agencies can only hope to do this well.

(This said, please keep your superb comments, insight, facts, and figures coming; the great majority of the time, you hit the nail on the head.)

Julie Burke

February 17, 2024 09:10 am

Gotta tidy up those deck chairs on the Titanic.

In Fiscal Year 2022, the Office of Patent Quality Assurance (OPQA) found that 23.9% and 20.3% of non-final and final office actions, respectively, failed to comply with the statutory requirements.

OPQA found that 7.1% of the allowances failed to comply with the statutory requirements. In 4.1% of the surveyed allowances, the examiner missed a 102 and/or 103 rejection.

But we can rest assured that those noncompliant claims were correctly numbered…

https://www.linkedin.com/posts/julie-burke-492264120_uspto-oig-patentquality-activity-7153799050729820161-snze?utm_source=share&utm_medium=member_desktop

Julie Burke

February 16, 2024 07:34 pm

Interesting work, Jeff O’Neill, thank you for sharing this data-driven analysis.

As a former USPTO Quality Assurance Specialist, I believe that the important trends you have exposed are consistent with two alarming USPTO quality policy changes.

First, in 2010, USPTO management and POPA union hatched a plan to remove ~200 managers who oversaw the quality of the patent examiners’ allowances and rejections from management. These employees, yours truly included, were overnight placed in the POPA union and given the new title BUT-QAS.

Next, POPA and management restructured the QASs’ performance and appraisal plans so that less time and fewer resources were available for quality reviews and fewer incentives were provided to QAS to find significant quality errors. If I remember correctly, QAS were given only ~4 hours to review an application.

Imagine automobile quality reviewers being given only enough time to check for a properly aligned hood ornament, four matching hub caps, and properly inflated tires. No time or encouragement to look under the hood, test the brakes and verify that the transmission was properly installed.

Moreover, the quality review was to be based only upon prior art of record – further searches discouraged. Given this regime, it is understandable that the QAS focused on “low hanging fruit,” technicalities that could be identified by quickly scanning the claims. With QAS performing cursory reviews, the perceived quality rate went up, I imagine more examiners were rated as commendable and outstanding, and the significance of the types of errors found declined.

If any major errors were found, we were not to further look into that examiner’s docket since the applications being reviewed should be selected randomly. No targeted reviews permitted.

Patent examiners are smart people. Because they know how their work is being reviewed, I am not surprised to see a greater focus on catching those pesky antecedent basis, claim numbering and word support errors.

https://www.linkedin.com/posts/julie-burke-492264120_bu-clarification-03dec2010pdf-activity-7138925545580367872-ldSg?utm_source=share&utm_medium=member_desktop

Second, when Congress asked the USPTO to address on-going patent quality concerns in 2021, POPA and USPTO leadership worked together to revise the examiners’ performance and appraisal plan. The revised PAP was presented to Congress as being intended as a roadmap to improved patent quality. Jeff’s data even supports this notion. It all depends upon what one wants to measure.

https://ipwatchdog.com/2021/07/01/usptos-roadmap-improved-patent-quality-lead-lake-wobegon/id=135152/

The revised PAP downgraded major quality elements, such as determining compliance with 102, 103 and 112, to basic tasks, to be undertaken by entry level employees, without any advanced training or sound legal understanding. Even GS14 and GS15 patent examiners are evaluated under the basic criteria for the quality of their 102, 103 and 112 rejections.

Jeff’s exposure of US patent examiners’ increased diligence – catching technical errors that can easily be identified by cursory review performable by entry level employees – is exactly the reaction I would expect from the patent corps working under the watered-down quality element of their PAPs.

Who cares if applicant’s supporting documents are written in Chinese, no English translation provided, as long as the 112 2nd type errors are caught in a four page office action?

https://www.linkedin.com/in/julie-burke-492264120/recent-activity/all/

Welcome, all, to the US Patent Registration Office, now churning out a steady pipeline of substantially unexamined patents ripe for PTAB invalidation.

Is Congress watching?

Josh Malone

February 16, 2024 02:06 pm

You have to run that by Google and Apple and their friends at the PTAB. They are the arbiters of errors. If they say a claim “would have been obvious” then it’s an error, regardless of what any other attorney, examiner, or judge has said.
