“Municipal agencies, businesses and inventors must keep themselves apprised of the increasingly complex regulatory environment around facial recognition technology to ensure compliance.”

News coverage abounds about the latest breakthroughs in facial recognition technology. But while this technology is an amazing technical achievement, it is not without potential drawbacks to privacy for those unwittingly subject to facial recognition in public. One recent development pairs facial recognition technology with the large volume of images scraped from the internet and social media, yielding an unusually powerful tool for uncovering the identity of an individual – including name, address and interests – from just a single photograph. In response to these advances, cities and states have begun developing an array of legal approaches to regulate facial recognition technology, some scrambling to limit or prohibit its use, others enthusiastically embracing it. In this patchwork legal landscape, it can be challenging to know where and when the technology can be used – and for what purposes.

Facial Recognition and Privacy Concerns

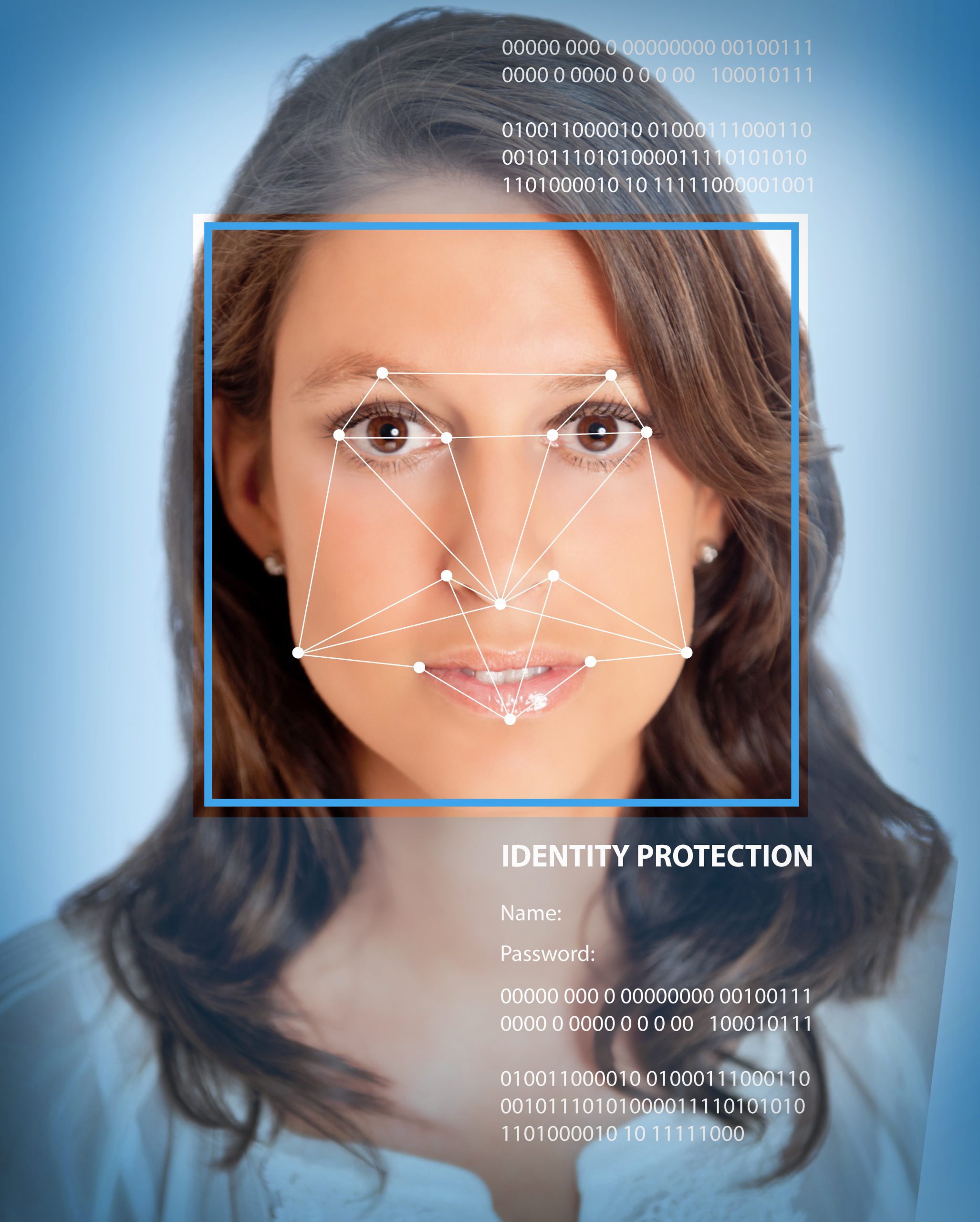

At its core, facial recognition technology consists of computer programs that analyze images of human faces and compare them against other images of human faces for the purpose of identifying or verifying the identity of the individuals in the images. The technology can be used for a myriad of purposes, such as identifying a smartphone user in order to unlock a phone, streamlining the check-in process at a hotel or rental car facility, enabling a customer to try out makeup virtually or sending parents images of their child at school or day camp. The technology also has obvious surveillance and law enforcement uses, and concern about the privacy implications of use in these contexts has driven many jurisdictions to place limits on the use of facial recognition technology.
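The two tasks named above, verifying a claimed identity and identifying an unknown face, can be illustrated with a minimal sketch. It assumes that some upstream model has already reduced each face image to a list of numbers; that model, the function names and the 0.8 threshold are all hypothetical and chosen only for illustration.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two face vectors: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def verify(probe, enrolled, threshold=0.8):
    """One-to-one check: does the probe match a single enrolled face (e.g. phone unlock)?"""
    return cosine_similarity(probe, enrolled) >= threshold

def identify(probe, gallery, threshold=0.8):
    """One-to-many search: return the best-matching name, or None if nothing clears the threshold."""
    best_name, best_score = None, threshold
    for name, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name
```

Verification compares a face against one stored record, while identification searches a whole gallery, which is why the size and source of that gallery drive much of the privacy concern.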

San Francisco and Oakland, California, and Brookline, Cambridge, Northampton and Somerville, Massachusetts, have all banned the use of facial recognition technology by city agencies. The city council in Portland, Oregon, has proposed going a step further, banning use of the technology in both the public and private sectors to the extent the technology is or might be used for security purposes.

Law enforcement agencies in many other cities have also taken stances on the technology. Some, like the Seattle Police Department, have ceased to use facial recognition technology amid concerns about biased and inaccurate results. Others, like the Plano, Texas, Police Department, tout the benefits they reap from the technology, which they credit with solving numerous cases. Still others, like the Detroit Police Department, permit the use of facial recognition technology only under certain conditions, such as when the technology seems reasonably likely to aid an investigation into violent crime. At the state level, three states have banned facial recognition technology in police body cameras: Oregon, New Hampshire and, most recently, California.

Getting Consent

Laws regarding the use of facial recognition technology are not limited to the public sector. Several states have worked biometric information into their existing data privacy laws – or created new laws specifically geared toward biometric data collection. Illinois was the first state to address collection of biometric data by private businesses. Its Biometric Information Privacy Act (BIPA), passed in 2008, places significant limitations on how private entities can collect and use a person’s biometric data. It requires that a business obtain informed consent prior to the collection of biometric data. It also prohibits profiting off biometric data, allows only a limited right to disclose collected data, sets forth data protection obligations for businesses and creates a private right of action for individuals whose data has been collected or used in violation of the law, even if the individual is unable to show that he or she suffered actual harm from the violation. Illinois has seen numerous class actions premised on this law in the decade since it took effect.

Texas law, like Illinois law, requires individuals or companies who collect biometric data to inform individuals before capturing the biometric identifier and to receive the individual’s consent. But unlike the Illinois law, the Texas biometric privacy statute does not require a written release. The Texas law, like the Illinois law, does prohibit the sale of biometric information, and it likewise sets restrictions on how such information is stored.

Washington state’s biometric privacy statute took effect in 2017. Like the Texas law, it does not specify that consent to collection of biometric data be in writing, nor does it create a private cause of action against violators. Unlike its Illinois and Texas counterparts, however, the Washington state law carves out an exemption for certain biometric data collection and storage: businesses may collect and store such information without providing notice and obtaining consent so long as the information is collected for “security purposes,” defined to include collection, storage and use of the information for purposes of preventing shoplifting, fraud and theft. The Washington state law also permits companies to sell biometric information under limited circumstances.
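For a business operating in more than one of these states, the differences described above can be laid side by side. The sketch below is a rough illustration only: it encodes just the attributes this article compares, and the class, field and function names are hypothetical rather than drawn from any statute.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BiometricStatute:
    state: str
    written_consent_required: bool
    private_right_of_action: bool
    security_purposes_exemption: bool
    sale: str  # "prohibited" or "limited", as summarized in the article

# Values reflect the article's summary of each law, not the statutory text.
STATUTES = [
    BiometricStatute("Illinois", True, True, False, "prohibited"),
    BiometricStatute("Texas", False, False, False, "prohibited"),
    BiometricStatute("Washington", False, False, True, "limited"),
]

def strictest_requirements(states):
    """Combine the most restrictive setting of each attribute across the given states."""
    chosen = [s for s in STATUTES if s.state in states]
    return {
        "written_consent_required": any(s.written_consent_required for s in chosen),
        "exposed_to_private_suits": any(s.private_right_of_action for s in chosen),
        # An exemption or a sale allowance only helps if every applicable state offers it.
        "can_rely_on_security_exemption": bool(chosen)
        and all(s.security_purposes_exemption for s in chosen),
        "sale_allowed_in_limited_cases": bool(chosen)
        and all(s.sale == "limited" for s in chosen),
    }
```

Calling `strictest_requirements({"Texas", "Washington"})`, for example, shows that a company active in both states cannot rely on Washington's security exemption, because Texas offers none.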

California’s law, the most recent of the four, applies on a somewhat more limited scale than the Illinois, Texas and Washington laws. The California law targets any company that both (1) operates in California and (2) either makes at least $25 million in annual revenue, gathers data on more than 50,000 users or makes more than half its money off of user data. The law treats biometric information, including images of one’s face, as personal information, and gives consumers rights to protect their personal information, such as the right to obtain information about the collection and sale of their personal information and the right to opt out of its sale. Although California lawmakers contemplated requiring businesses to disclose their use of facial recognition technology by conspicuously posting physical signs at every entrance to any facility that uses it, the proposed legislation requiring such disclosure failed to garner sufficient interest to pass and has been ordered inactive.
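The two-part applicability test described above can be written out as a short check. This is a sketch of the thresholds as this article states them, not of the statutory language; the names are hypothetical, and a real determination would turn on definitions the article does not cover.

```python
from dataclasses import dataclass

# Thresholds as summarized in the article.
REVENUE_THRESHOLD_USD = 25_000_000
USER_THRESHOLD = 50_000
DATA_REVENUE_SHARE_THRESHOLD = 0.5

@dataclass
class Company:
    operates_in_california: bool
    annual_revenue_usd: float
    users_with_data_collected: int
    share_of_revenue_from_user_data: float  # fraction between 0.0 and 1.0

def california_law_may_apply(company: Company) -> bool:
    """Prong (1) must hold, plus at least one of the three alternatives in prong (2)."""
    if not company.operates_in_california:
        return False
    return (
        company.annual_revenue_usd >= REVENUE_THRESHOLD_USD  # "at least $25 million"
        or company.users_with_data_collected > USER_THRESHOLD  # "more than 50,000 users"
        or company.share_of_revenue_from_user_data > DATA_REVENUE_SHARE_THRESHOLD  # "more than half"
    )
```

Note that the three alternatives are independent: a small company with modest revenue can still fall within the law if most of its income comes from user data.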

Stay Engaged

Lawmakers in cities and states around the country continue to explore options to limit how the public and private sectors use facial recognition technology. Municipal agencies, businesses and inventors must keep themselves apprised of this increasingly complex regulatory environment. If you live in an area considering such a law, you should participate in the process. If you collect biometric data and either operate a business in a state or city with such laws or collect data on individuals living in any of those areas, you must keep abreast of the changes in this field. A single company may need to comply with a patchwork of different laws. This is an ever-expanding and changing area of law, and what was acceptable and legal previously could prove problematic or illegal in the future.

Join the Discussion

One comment so far.

Anon

January 28, 2020 05:08 pm

For consideration: a machine that is capable of facial recognition (and acting on such) does NOT “capture your face,” but instead generates a logical construct based on gathered inputs.

Once the logical construct is obtained, then THAT logical construct (and not your actual face) is what is used to “open doors” as such.

How should such a logical construct be treated any differently — under the law — than any other construct for opening doors?